ICLR 2026 is in one week! We have an exciting lineup of posters and orals, along with six keynotes covering a range of areas from core machine learning to robotics, neuroscience, and AI for science!

Those who are attending can see the full schedule here.

Maja Matarić

The Challenges of Human-Centered AI and Robotics: What We Want, Need, and are Getting From Human-Machine Interaction

9:00 AM Thursday

Abstract: Language-based AI is now ubiquitous, and user expectations for intelligent machines are scaling along with it: we expect machines to understand us, predict our needs and wants, do what we enjoy and prefer, and adapt as we change our moods and minds, learn, grow, and age. Physical AI, in the form of robotics, is the next major AI challenge, and it is not ready to leap into our daily lives yet. While massive investment is focused on functional behavior of humanoid robots (perceiving the world, moving around, and manipulating objects), human-robot interaction (HRI) is relegated to an afterthought. It is assumed that once a robot can move around and do things, it will be useful and wanted, yet over 25 years of research in HRI tells us otherwise. While the needs for human-centered services continue to grow, research and development is minimal. This talk will discuss how bringing together robotics, AI, and machine learning for long-term user modeling, real-time multimodal behavioral signal processing, and affective computing is enabling machines to understand, interact, and adapt to users’ specific and ever-changing needs. We will overview methods and challenges of sparse and noisy heterogeneous, multi-modal, personal interaction data and of creating expressive agent and robot behavior toward understanding, coaching, motivating, and supporting a wide variety of user populations across the age span (infants, children, adults, elderly), ability span (typically developing, autism, anxiety, stroke, dementia), contexts (schools, therapy centers, homes), and deployment durations (from weeks to 6 months) through socially assistive robotics. We will discuss the challenges of understanding what we humans want from interactions with machines vs. what we need vs. what we are getting, and how those distinctions are shaping the future of not just AI and ML but society at large.

Speaker Bio: Maja Matarić is a Chaired and Distinguished Professor of Computer Science (with appointments in Neuroscience and Pediatrics) at the University of Southern California, and Principal Scientist at Google DeepMind. Her PhD and MS in Computer Science and AI are from MIT, and her BS in Computer Science is from the University of Kansas. She is a member of the National Academy of Engineering and the American Academy of Arts and Sciences, fellow of AAAS, IEEE, AAAI, and ACM, recipient of the Presidential Award for Excellence in Science, Mathematics & Engineering Mentoring (from President Obama), Anita Borg Institute Women of Vision, ACM Athena Lecture, ACM Eugene Lawler, Mass Robotics Medal, NSF Career, MIT TR35 Innovation, and IEEE RAS Early Career Awards, and author of “The Robotics Primer” (MIT Press). She led the USC Viterbi K-12 STEM Center and actively mentors and empowers K-12 students, women, and other groups toward pursuing STEM careers. A pioneer of the field of socially assistive robotics, she develops human-machine interaction methods for personalized support for users with challenges, including autism, stroke, Alzheimer’s disease and other forms of dementia, anxiety, and other major health and wellness challenges. Her research group has conducted many of the first and still largest real-world studies in complex environments (including schools, nursing homes, retirement centers, and homes) to produce deep insights into complex human-machine interaction challenges with real-world users in situ.

Max Welling

From Physics to AI to Materials; A Journey from Foundations to Impact

1:45 PM Thursday

Abstract: Do we reward strange new, potentially paradigm-shifting ideas or do we focus on engineering, scaling and bold numbers? In this talk I will discuss how insights from physics have inspired me to develop new ideas for AI. In the first part of the talk I will argue that 10 years after the introduction of symmetries in deep learning, spontaneous symmetry breaking may be at the root of modern AI. I will argue that internal symmetries, or capsules, in the broken phase can propagate information over long spatiotemporal distances. As such they can act as memory channels and facilitate reasoning. In the second part of the talk I will discuss the consequences of breaking time-reversal symmetry, leading to information loss and entropy production. The mathematics describing such systems is virtually identical in probabilistic AI and stochastic thermodynamics, the modern theory of thermodynamic systems out of equilibrium. This leads to new insights and methods to estimate, for example, the free energy of a molecule binding to a target. Finally, I will describe my personal journey deploying these powerful AI tools through my startup CuspAI to solve some of society’s most urgent problems, such as sustainable energy and climate change. I conclude by stressing that we all have an important role to play in ensuring our tools are used responsibly. We cannot look away.

Speaker Bio: Prof. Dr. Max Welling is a research chair in Machine Learning at the University of Amsterdam and a Distinguished Scientist at MSR. He is a fellow at the Canadian Institute for Advanced Research (CIFAR) and the European Lab for Learning and Intelligent Systems (ELLIS) where he also serves on the founding board. His previous appointments include VP at Qualcomm Technologies, professor at UC Irvine, postdoc at U. Toronto and UCL under supervision of Prof. Geoffrey Hinton, and postdoc at Caltech under supervision of Prof. Pietro Perona. He finished his PhD in theoretical high energy physics under supervision of Nobel laureate Prof. Gerard ’t Hooft.

Max Welling served as associate editor in chief of IEEE TPAMI from 2011 to 2015, has served on the advisory board of the NeurIPS Foundation since 2015, and was program chair and general chair of NeurIPS in 2013 and 2014, respectively. He was also program chair of AISTATS in 2009 and ECCV in 2016 and general chair of MIDL 2018. Max Welling is a recipient of the ECCV Koenderink Prize in 2010 and the ICML Test of Time award in 2021. He co-directs the Amsterdam Machine Learning Lab (AMLab), the Qualcomm-UvA deep learning lab (QUvA), and the Bosch-UvA Deep Learning lab (Delta).

Percy Liang

Marin: Open Development of Frontier AI

9:00 AM Friday

Abstract: As AI capabilities skyrocket, openness plummets: the scientific community and broader public know little of how frontier models (including open-weight models) are trained. I will describe Marin, a radically new way of doing model development, inspired by true open-source software. Every experiment is done in the open, and anyone can suggest ideas, review, and even run experiments through GitHub, providing a better way of doing science that improves on preregistration, reproducibility, and peer review. I will discuss a selection of scientific results that have emerged from Marin, including new optimizers and scaling laws. We hope that Marin will be a platform for the community to participate in the development of frontier AI.

Bio: Percy Liang is a Professor of Computer Science at Stanford University, co-founder of Together AI and Simile AI, and the creator of Marin, a platform for developing foundation models fully in the open. He has made a number of contributions in AI, including the SQuAD question answering dataset, the HELM benchmarking framework, generative agents, prefix tuning, and coining the term “foundation models”. His awards include the Presidential Early Career Award for Scientists and Engineers (2019), IJCAI Computers and Thought Award (2016), an NSF CAREER Award (2016), a Sloan Research Fellowship (2015), a Microsoft Research Faculty Fellowship (2014), and paper awards at ACL, EMNLP, ICML, COLT, ISMIR, CHI, UIST, and RSS.

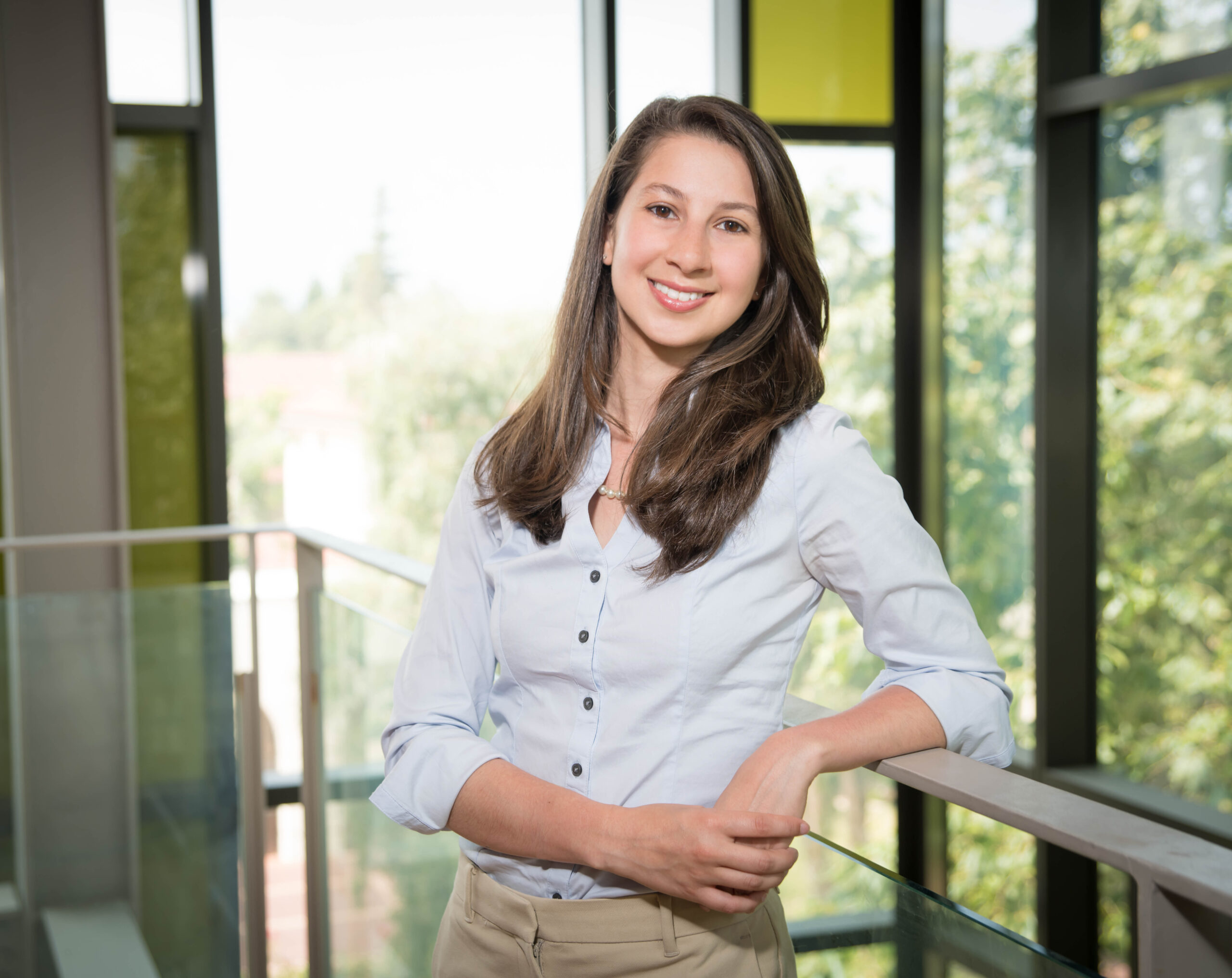

Katie Bouman

Images of the Hidden Universe

1:45 PM Friday

Abstract: Some of the most iconic images in modern science were never captured by a camera in the traditional sense. Instead, they were inferred from indirect and incomplete measurements, using a combination of physics, prior knowledge, and computation. In this talk, I will explore how physics and machine learning are working together to illuminate parts of the universe that are difficult – or even fundamentally impossible – to observe directly. I’ll begin with the story of black hole imaging, where theory long predicted what we should see, and where confidence came not from a single image, but from the consistency of features across many reconstructions of the same data. Along the way, I’ll show that this kind of inference is not unique to extreme astrophysics, but also underlies how we form images in familiar technologies we rely on every day, where images are similarly reconstructed from indirect measurements using models and assumptions. I’ll show that simple assumptions can take us far, but also where they begin to limit what we can learn. Incorporating richer assumptions through the help of machine learning allows us to extract more from the same data and explore a full range of possibilities that respects varying strengths of the expected physics. Finally, I will discuss how these ideas extend beyond black holes to other scientific imaging problems, including mapping the distribution of dark matter from subtle distortions in the shapes of galaxies due to gravitational lensing. Together, these examples illustrate how modern imaging increasingly relies on integrating physics and machine learning to extract meaningful information from fundamentally limited data to uncover our hidden universe.

Bio: Katherine L. (Katie) Bouman is a professor in the Computing and Mathematical Sciences, Electrical Engineering, and Astronomy Departments at the California Institute of Technology. Her work combines ideas from signal processing, computer vision, machine learning, and physics to find and exploit hidden signals for scientific discovery. Before joining Caltech, she was a postdoctoral fellow in the Harvard-Smithsonian Center for Astrophysics. She received her Ph.D. in the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT in EECS, and her bachelor’s degree in Electrical Engineering from the University of Michigan. She is a Rosenberg Scholar, Heritage Medical Research Institute Investigator, recipient of the Royal Photographic Society Progress Medal, Electronic Imaging Scientist of the Year Award, PECASE Award, Sloan Fellowship, IEEE SPS Laplace Award, and co-recipient of the Breakthrough Prize in Fundamental Physics. As part of the Event Horizon Telescope Collaboration, she co-led the Imaging Working Group and acted as coordinator for papers concerning the first imaging of the M87* and Sagittarius A* black holes.

Karen Adolph

Learning while developing: How infants acquire intelligent behavior

9:00 AM Saturday

Abstract: Behavior is everything we do. With age and experience, infant behavior becomes more flexible, adaptive, and functional. More intelligent. How do infants acquire intelligent behavior? Babies are learning while developing. Advances in motor skills expand infants’ interactions with the environment—the parts of the environment they “touch” with eyes, hands, and body. In the course of everyday activity, infants acquire immense amounts of time-distributed, variable, error-filled practice for every type of foundational behavior that researchers study. Practice is largely spontaneous, self-motivated, and frequently not goal directed. Formal robot models suggest that infants’ natural practice regimen—replete with variability and errors—is optimally suited for building an intelligent behavioral system that responds adaptively to the constraints and opportunities of continually changing bodies and skills in an ever-changing world. I propose that open video sharing will speed progress toward understanding behavior and its development.

Bio: Karen E. Adolph is Julius Silver Professor of Psychology and Neuroscience and Professor of Applied Psychology and Child and Adolescent Psychiatry at New York University. She uses observable motor behaviors and a variety of technologies (video, computer vision, motion tracking, instrumented floor, head-mounted eye tracking, EEG, etc.) to study developmental processes. Adolph directs the Databrary.org video library and maintains the Datavyu.org video-annotation tool. She is a Fellow of the American Psychological Association, Association for Psychological Science, and American Association for the Advancement of Science and Past-President of the International Congress on Infant Studies. She received the Kurt Koffka Medal, Cattell Sabbatical Award, APF Fantz Memorial Award, APA Boyd McCandless Award, ICIS Young Investigator Award, FIRST and MERIT awards from NICHD, and five teaching awards from NYU.

Pablo Arbeláez

Artificial Intelligence for Open Science

1:45 PM Saturday

Abstract: I will present our latest research on Artificial Intelligence for Open Science at the Center for AI at the Universidad de los Andes, Colombia (CinfonIA). We will focus on our ongoing collaborative projects in scientific disciplines such as robotic surgery, spatial transcriptomics, drug discovery, geology, and nature conservation.

Bio: Pablo Arbeláez obtained his Ph.D. in Applied Mathematics with honors from Paris-Dauphine University in 2005. From 2007 to 2014, he served as a senior research scientist with the Computer Vision Group at the University of California, Berkeley. In 2014, Pablo Arbeláez joined the faculty of the Department of Biomedical Engineering at Universidad de los Andes. Since 2020, he has been the director of the Center for Research and Formation in Artificial Intelligence (CinfonIA) at Universidad de los Andes, the first AI-focused academic center in Latin America, underscoring his commitment to transformative AI solutions and to empowering Latin American talent in the global AI community. He has made significant contributions to fundamental problems in Computer Vision, and his main research focus is on applications of Artificial Intelligence for Social Good.